Good navigation can make a site feel effortless, while poor navigation buries your best pages — and that contrast matters for crawlability and indexing. You’ll want to keep important pages within a few clicks of the homepage, use clear internal links, and avoid orphaned content so search bots find and re-crawl key pages more often. There’s more to check — from depth limits to renderable links — that’ll determine how well your site gets discovered.

Why Crawlability and Indexability Matter for Navigation

Because search engines rely on links to discover pages, a clear navigation structure makes it far easier for bots to crawl and index your site, ensuring important content isn’t buried or missed.

You’ll boost crawlability and indexability by keeping key pages within 2–3 clicks of the homepage and using consistent internal linking so link equity flows where it matters.

A logical site architecture reduces broken links and orphaned pages that block indexing and cost you organic traffic.

When navigation supports user experience, engagement tends to improve: visitors stay longer and fewer leave after a single page, which generally goes hand in hand with stronger search performance.

Run an SEO audit regularly to spot navigation issues, fix crawlability gaps, and preserve indexability for all critical pages.

How Site Depth and Click-Paths Affect Crawl Frequency

A useful rule of thumb for crawl frequency: the fewer clicks it takes to reach a page from your homepage, the more often search engines tend to visit it.

You should treat site depth as a visibility signal: pages buried many clicks deep tend to get crawled less often, harming indexability and search engine visibility.

Adopt a flat site architecture so key pages live within 2–3 clicks; that improves crawl frequency and overall crawlability.

Optimize click-paths and navigation to reduce hops between pages, and crawlers will discover and index content more efficiently.

Following SEO best practices, prioritize making important pages reachable quickly rather than hiding them behind long link chains.
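One way to check click depth is a breadth-first search over your internal link graph. The sketch below is a minimal illustration, not a full crawler: the `site` dictionary and its URLs are hypothetical, and in practice you would build the graph from a crawl export.

```python
from collections import deque

def click_depths(links, home):
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical link graph: each key links to the pages in its list.
site = {
    "/": ["/services", "/blog"],
    "/services": ["/services/audit"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/archive/2019/old-guide"],
}

depths = click_depths(site, "/")
# Flag pages deeper than 2 clicks; raise the limit to 3 if that is your target.
too_deep = sorted(page for page, depth in depths.items() if depth > 2)
print(too_deep)  # ['/blog/archive/2019/old-guide']
```

Pages that never appear in `depths` are unreachable from the homepage through links, which is worth investigating separately.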

Which Pages Should Be Top-Level (Near the Homepage)

Which pages should sit closest to your homepage? You should feature top-level pages that house important content driving business value: core service pages, product categories, contact and about us, plus current promotion landing pages.

Prioritize high-traffic pages so they’re reachable within 2–3 clicks, improving user experience and helping search engines crawl efficiently.

Design the site structure so the path to each key page is clear and logical, and use internal linking thoughtfully to spread link equity across key sections without cluttering the menu.

Keep the menu focused to avoid diluting authority; surface pages that convert or inform users fastest.

Finally, make a habit of regularly auditing which top-level pages are performing, so you can swap or reorganize items as user needs and search trends evolve.

Internal Linking Patterns That Boost Indexation and Authority

While designing your site’s architecture, make internal linking a deliberate strategy rather than an afterthought: clear, contextual links help crawlers find and index pages faster and let you channel link equity to the pages that matter most.

You should map an internal linking strategy that blends logical site structure with user experience, using contextual anchor text to signal relevance for crawlability and indexation.

Ensure no orphan pages exist by linking every page from at least one related hub, and prioritize links to high-value content to concentrate link equity effectively.
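A simple orphan check compares the pages you know about (for example, from your sitemap or CMS) with the pages that receive at least one internal link. This is a rough sketch under that assumption; `all_pages`, `links`, and the URLs are hypothetical sample data.

```python
def find_orphans(all_pages, links, home="/"):
    """Return known pages that no other page links to (the homepage is exempt)."""
    linked = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not make a page discoverable
                linked.add(target)
    return sorted(set(all_pages) - linked - {home})

# Hypothetical inventory of known pages and the internal links between them.
all_pages = ["/", "/services", "/blog", "/blog/post-1", "/landing/spring-sale"]
links = {
    "/": ["/services", "/blog"],
    "/blog": ["/blog/post-1"],
    "/landing/spring-sale": ["/landing/spring-sale"],
}

print(find_orphans(all_pages, links))  # ['/landing/spring-sale']
```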

Regular audits will uncover broken internal links and outdated paths so you can fix them promptly, preserving crawl budget and site authority.

Consistent internal linking boosts SEO while improving navigation for users and bots alike.

Fix Blockers: robots.txt, JS Rendering, and Redirects

If crawlers can’t access your pages, nothing else matters — start by checking robots.txt, JavaScript rendering, and redirects to remove barriers to indexing.

You should audit robots.txt to verify it isn’t blocking important URLs and confirm search engine crawlers can fetch resources needed for rendering.
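As a quick sanity check, Python's standard-library `urllib.robotparser` can test a list of important URLs against a set of robots.txt rules. The rules and URLs below are hypothetical, and this parser's matching may differ in edge cases from a particular search engine's own parser, so treat the result as a first pass.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in practice you would load your live file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /services/
"""

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given rules disallow for `user_agent`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

# Hypothetical pages you expect to be crawlable.
important = [
    "https://example.com/services/seo-audit",
    "https://example.com/blog/",
]
print(blocked_urls(ROBOTS_TXT, important))
# ['https://example.com/services/seo-audit']
```

Here the `/services/` rule blocks a page that was meant to rank, which is the kind of accidental exclusion this audit is meant to catch.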

Test JavaScript rendering so dynamic content appears to bots; if it doesn’t, serve prerendered HTML or adjust scripts to expose links and content.
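One rough way to spot links that depend on JavaScript is to parse the raw, unrendered HTML and list the `<a href>` targets it actually contains. The sketch below uses the standard-library `html.parser`; the sample markup is hypothetical, and it does not execute scripts, so it only shows what a non-rendering crawler would see.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collect href values from <a> tags in static HTML."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)

def links_in_raw_html(html):
    parser = LinkCollector()
    parser.feed(html)
    return parser.hrefs

# Hypothetical server response: one real link, one click handler with no href.
server_html = """
<nav>
  <a href="/services">Services</a>
  <span onclick="go('/pricing')">Pricing</span>
</nav>
"""
print(links_in_raw_html(server_html))  # ['/services']
```

The `/pricing` destination never appears because it exists only inside a click handler, so a crawler that reads links from HTML has nothing to follow.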

Review redirects to remove chains and loops that bloat the crawl path and waste crawl budget.
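Chains and loops are easy to detect once you have a map of redirect sources to targets. The following sketch assumes you have already exported such a map (the `redirects` dictionary and its paths are hypothetical) and simply walks it without making any network requests.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow redirects from `start`; return (path, is_loop)."""
    path = [start]
    seen = {start}
    while path[-1] in redirects and len(path) <= max_hops:
        target = redirects[path[-1]]
        if target in seen:  # we have been here before: a redirect loop
            return path + [target], True
        path.append(target)
        seen.add(target)
    return path, False

# Hypothetical redirect map: source path -> target path.
redirects = {
    "/old": "/older-new",
    "/older-new": "/new",
    "/a": "/b",
    "/b": "/a",
}

for start in ("/old", "/a"):
    path, is_loop = trace_redirects(start, redirects)
    hops = len(path) - 1
    label = "loop" if is_loop else ("chain" if hops > 1 else "ok")
    print(start, "->", " -> ".join(path[1:]), f"[{label}]")
```

A chain such as `/old` to `/older-new` to `/new` can usually be collapsed by pointing the first URL straight at the final destination.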

Avoid excessive use of nofollow on internal links, which prevents link equity from flowing to deeper pages.

Run regular audits (for example with Google Search Console) to catch issues early, restoring crawlability and improving indexing and visibility in search results.

Measuring Crawlability: Metrics and Tests to Validate Changes

You’ve removed blockers like robots.txt bans, broken redirects, and rendering problems — now you need to prove those fixes actually improved crawling.

Use Google Search Console to track indexing, monitor crawl errors, and confirm how many pages are indexed. Check your XML sitemap for completeness so search engine bots can discover important pages.
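To check a sitemap for completeness, you can parse it and compare its URLs against the pages you consider important. This is a minimal sketch using the standard-library XML parser; the sitemap content and the `must_have` list are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_urls(xml_text):
    """Return the set of <loc> URLs listed in a sitemap document."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }

# Hypothetical sitemap; in practice you would read your own sitemap.xml.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/services</loc></url>
</urlset>"""

# Hypothetical list of pages that should be discoverable.
must_have = ["https://example.com/services", "https://example.com/contact"]

missing = [url for url in must_have if url not in sitemap_urls(SITEMAP)]
print(missing)  # ['https://example.com/contact']
```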

Review server logs to see bot frequency, which URLs they request, and any remaining barriers. Run SEO audit tools to surface structural problems, broken links, and orphan pages that still hinder crawlability.
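A short script can summarize bot activity from access logs. The sketch below assumes the common "combined" log format and matches on the user-agent string only; the log lines are hypothetical, and because user agents can be spoofed, a production check would also verify the requesting IP.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a combined-format line.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" (?P<status>\d{3}) .*"(?P<agent>[^"]*)"$'
)

def bot_hits(lines, bot_token="Googlebot"):
    """Count requests and 4xx/5xx responses per path for one bot."""
    hits, errors = Counter(), Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or bot_token not in match.group("agent"):
            continue
        hits[match.group("path")] += 1
        if match.group("status")[0] in "45":
            errors[match.group("path")] += 1
    return hits, errors

# Hypothetical log excerpt.
GOOGLEBOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    f'66.249.66.1 - - [10/May/2024:06:25:01 +0000] "GET /services HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/May/2024:09:12:44 +0000] "GET /services HTTP/1.1" 200 5123 "-" "{GOOGLEBOT}"',
    f'66.249.66.1 - - [10/May/2024:09:13:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT}"',
    '203.0.113.7 - - [10/May/2024:09:14:10 +0000] "GET /blog HTTP/1.1" 200 8841 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits, errors = bot_hits(sample_log)
print(hits.most_common())  # [('/services', 2), ('/old-page', 1)]
print(dict(errors))        # {'/old-page': 1}
```

Important pages that are missing from `hits` over a long window are candidates for better internal linking.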

Measure page load times and server response times, because slow responses can reduce how many pages a crawler fetches on each visit.

Combine these metrics into a repeatable test plan: baseline before changes, apply fixes, then re-measure to validate improved crawlability and indexing.
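The before-and-after comparison can be as simple as diffing two metric snapshots. The sketch below is illustrative only: the metric names and numbers are hypothetical placeholders, not real results, and you would substitute whatever you record from your own reports.

```python
def compare_snapshots(before, after, lower_is_better=()):
    """Return {metric: (delta, improved)} for metrics present in both snapshots."""
    report = {}
    for metric in sorted(before.keys() & after.keys()):
        delta = after[metric] - before[metric]
        improved = delta < 0 if metric in lower_is_better else delta > 0
        report[metric] = (delta, improved)
    return report

# Hypothetical snapshots taken before and after the navigation fixes.
before = {"indexed_pages": 180, "crawl_errors": 24, "avg_click_depth": 3.5}
after = {"indexed_pages": 210, "crawl_errors": 6, "avg_click_depth": 2.5}

report = compare_snapshots(
    before, after, lower_is_better={"crawl_errors", "avg_click_depth"}
)
for metric, (delta, improved) in report.items():
    print(f"{metric}: {delta:+} ({'improved' if improved else 'not improved'})")
```

Keeping the snapshot format fixed makes the test repeatable each time you change the navigation.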

Frequently Asked Questions

What Is Crawlability and Indexing?

Crawlability is how easily bots can access your site; indexing is how search engines store and organize crawled pages. You should optimize links and structure so bots can find, crawl, and index your important content reliably.

What Is the Impact of Crawlability on SEO?

Crawlability directly affects your SEO because it determines whether search engines can find and index your pages; if bots can’t crawl, your content won’t rank, so you’ll lose visibility, traffic, and potential conversions.

Which Strategy Would Help You Improve Your Site’s Crawlability?

Flatten your site architecture so key pages sit within 2–3 clicks of the homepage, build clear menus and contextual internal links, submit an updated XML sitemap, and regularly audit for broken links.

What Is a Navigational Structure?

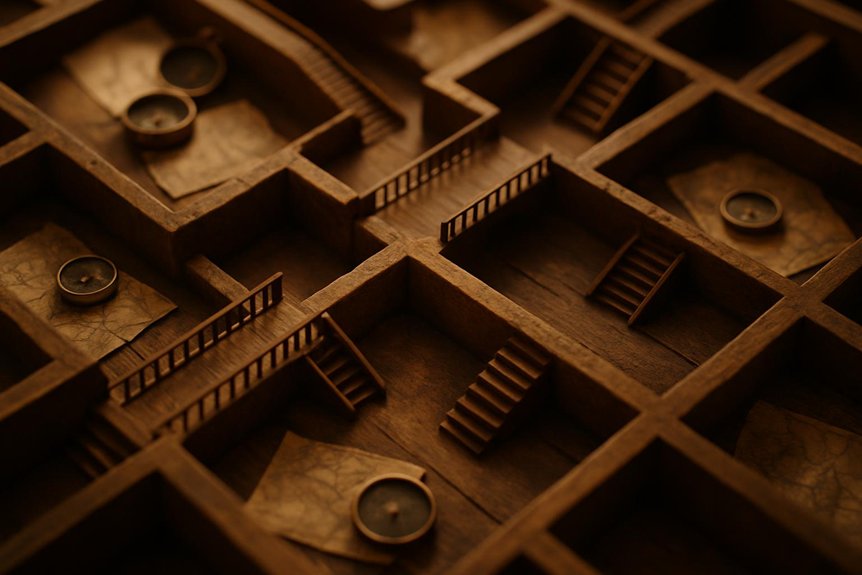

A navigational structure is the organized layout of your site’s menus, links, and hierarchies that helps users and search engines find pages quickly; it groups content, reduces click depth, and supports clear internal linking throughout your site.

Conclusion

You’ve seen how navigation shapes crawlability and indexing, so prioritize clear hierarchies and short click-paths to make key pages easy to find. Put high-value pages near the homepage, use internal links to pass authority, and fix blockers like robots.txt, JS issues, and redirects. Measure changes with crawl stats and indexing reports to validate improvements. A well-structured menu is like a lighthouse for crawlers, guiding them quickly to your most important content.